From Punch Cards to Precision: The History of NC and CNC Machinery

1. The Birth of Numerical Control (NC)

The origins of numerical control (NC) date back to the late 1940s and early 1950s, when the U.S. Air Force sought more precise and repeatable methods for manufacturing complex aircraft parts. John T. Parsons, often credited as the father of NC, proposed driving machine tools from coordinate data computed on punched-card equipment. An Air Force-funded collaboration with MIT's Servomechanisms Laboratory turned the idea into a working prototype milling machine, demonstrated in 1952. This innovation marked the beginning of programmable manufacturing.

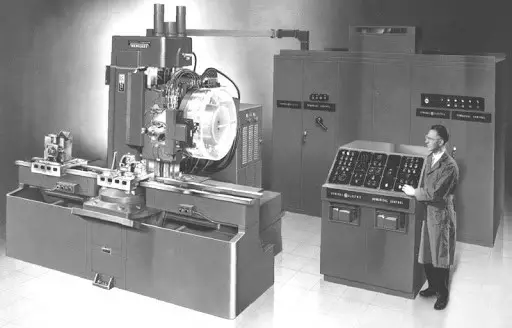

2. Early NC Machines and Their Limitations

The first NC machines were retrofitted milling machines controlled by punched paper tape. These systems allowed for automated movement along multiple axes, but they were limited by hard-wired control electronics and cumbersome input methods. Programming was tedious, and a single error in the tape could scrap a part or halt production until the tape was corrected and punched again.

3. The Rise of Digital Control

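During the 1960s and into the early 1970s, digital computers (first minicomputers, later microprocessors) began replacing dedicated control hardware in NC machines, giving rise to Computer Numerical Control (CNC). This shift allowed for more complex operations, faster processing, and programs that could be stored and edited in the controller's memory instead of being read from tape on every run. The programs themselves were written in G-code, a language standardized in the United States as EIA RS-274 and internationally as ISO 6983, which tells the machine where to move, how fast, and along what path.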
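To make the idea of G-code concrete, the following Python sketch reads a tiny program and follows its moves. It is an illustration only, not how any real controller is implemented: the function names `parse_block` and `trace` and the sample program are hypothetical, and only a handful of common words (G00 rapid move, G01 linear feed move, X/Y coordinates in absolute mode) are handled.

```python
import re

# One G-code "word" is a letter followed by a signed number, e.g. G01 or X-12.5.
WORD_RE = re.compile(r"([A-Za-z])\s*([-+]?(?:\d+\.?\d*|\.\d+))")


def parse_block(line):
    """Split one G-code block (line) into (letter, value) pairs.

    Comments in parentheses or after ';' are dropped first.
    """
    line = re.sub(r"\(.*?\)", "", line)  # strip (inline comments)
    line = line.split(";", 1)[0]         # strip ; end-of-line comments
    return [(letter.upper(), float(value)) for letter, value in WORD_RE.findall(line)]


def trace(program):
    """Return the (x, y) points visited by G00/G01 moves, assuming absolute coordinates."""
    x, y = 0.0, 0.0
    motion = None  # G00/G01 are modal: they stay active until changed
    points = []
    for line in program.splitlines():
        words = parse_block(line)
        for letter, value in words:
            if letter == "G" and value in (0, 1):
                motion = int(value)
        coords = {letter: value for letter, value in words if letter in ("X", "Y")}
        if motion is not None and coords:
            x = coords.get("X", x)
            y = coords.get("Y", y)
            points.append((x, y))
    return points


# Hypothetical sample program: trace three sides of a 10 mm x 5 mm rectangle.
PROGRAM = """\
G21 (millimetres)
G90 ; absolute positioning
G00 X0 Y0
G01 X10 Y0 F200
X10 Y5
G01 X0 Y5
"""

if __name__ == "__main__":
    for point in trace(PROGRAM):
        print(point)
```

Note that the fifth line of the sample carries no G word at all. Because motion commands are modal, the machine keeps feeding in a straight line under the G01 that is still in effect.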

4. The 1970s: CNC Goes Commercial

As computing power became more affordable, CNC machines entered mainstream manufacturing. Companies like Fanuc, Siemens, and GE developed controllers that could be integrated into lathes, mills, and grinders. This decade saw CNC move from aerospace R&D labs into automotive, defense, and general machining shops.

5. The 1980s: CAD/CAM Integration

The 1980s brought a revolution in design-to-production workflows. Computer-Aided Design (CAD) and Computer-Aided Manufacturing (CAM) software allowed engineers to design parts digitally and generate CNC code directly from those models. This integration reduced errors, improved design flexibility, and shortened lead times.
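As a rough illustration of what "generating CNC code from a model" means, the Python sketch below turns a list of 2D points into a short G-code program. It is a deliberately minimal sketch under stated assumptions: the function `polyline_to_gcode`, its parameters, and the default feed and depth values are hypothetical, and real CAM software also handles tool-radius compensation, feeds and speeds, multiple passes, and controller-specific post-processing.

```python
def polyline_to_gcode(points, feed=200.0, safe_z=5.0, cut_z=-1.0):
    """Emit a minimal G-code program that traces `points` at depth `cut_z`.

    `points` is a sequence of (x, y) pairs in millimetres. All parameter
    names and defaults here are illustrative assumptions.
    """
    if len(points) < 2:
        raise ValueError("need at least two points to cut a path")

    x0, y0 = points[0]
    lines = [
        "G21",                           # units: millimetres
        "G90",                           # absolute positioning
        f"G00 Z{safe_z:.3f}",            # rapid up to a safe height
        f"G00 X{x0:.3f} Y{y0:.3f}",      # rapid to the start of the path
        f"G01 Z{cut_z:.3f} F{feed:.1f}", # feed down to cutting depth
    ]
    for x, y in points[1:]:
        lines.append(f"G01 X{x:.3f} Y{y:.3f}")  # straight cutting move
    lines.append(f"G00 Z{safe_z:.3f}")          # retract
    lines.append("M30")                         # end of program
    return "\n".join(lines)


# Hypothetical "model": the outline of a 10 mm x 5 mm rectangle.
RECTANGLE = [(0, 0), (10, 0), (10, 5), (0, 5), (0, 0)]

if __name__ == "__main__":
    print(polyline_to_gcode(RECTANGLE))
```

The design point is the separation of concerns: the geometry lives in the point list, while the machine-specific details live in the function that emits code. That separation is what lets a design change flow through to the shop floor without hand-editing the program.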

6. The 1990s: PC-Based CNC and Open Architecture

With the rise of personal computers, CNC systems began shifting from proprietary hardware to PC-based platforms. Open architecture allowed manufacturers to customize their control systems, integrate sensors, and connect machines to broader networks — laying the groundwork for smart factories.

7. The 2000s: Automation and Multi-Axis Machining

As CNC matured, machines became more capable. Multi-axis machining centers (like 5-axis mills) enabled the production of highly complex geometries in a single setup. Automation systems, including robotic arms and pallet changers, further increased throughput and reduced labor costs.

8. The 2010s: IoT and Smart Manufacturing

The Industrial Internet of Things (IIoT) brought real-time monitoring, predictive maintenance, and cloud connectivity to CNC environments. Machines could now report their status, track tool wear, and optimize cycle times autonomously — a major leap toward Industry 4.0.

9. Today: AI, Digital Twins, and Hybrid Manufacturing

Modern CNC systems increasingly incorporate artificial intelligence. Machine-learning models are used to optimize tool paths, predict failures, and in some systems adapt cutting parameters to material inconsistencies in real time. Digital twins (virtual replicas of machines and processes) allow for simulation, training, and remote diagnostics. Additive manufacturing (3D printing) is also being integrated with CNC to create hybrid systems capable of both subtractive and additive operations.

10. Conclusion: Precision Meets Intelligence

From punched tape to predictive analytics, the evolution of NC and CNC machinery reflects the broader arc of technological progress. What began as a quest for repeatability in aerospace has become a cornerstone of modern manufacturing. As AI and automation continue to evolve, CNC will remain at the heart of precision engineering — smarter, faster, and more adaptable than ever.